
ReMarkable Paper Pure vs. Amazon Kindle Scribe: I've written on both E Ink tablets - this one wins

Lost your Roku remote? Here are four ways you can still control your TV

Ivanti EPMM CVE-2026-6973 RCE Under Active Exploitation Grants Admin-Level Access

PCPJack Credential Stealer Exploits 5 CVEs to Spread Worm-Like Across Cloud Systems

Hundreds of readers bought this E Ink tablet - and I highly recommend it

60% of MD5 password hashes are crackable in under an hour

After using Lenovo's $2,600 Yoga, I'm taking premium Windows laptops seriously again

Whoop vs. Fitbit Air: I compared Google's new fitness band to the industry favorite

One Click, Total Shutdown: The "Patient Zero" Webinar on Killing Stealth Breaches

PAN-OS RCE Exploit Under Active Use Enabling Root Access and Espionage

10 secret Netflix codes I use to find hidden movies (and how to enter them) - it's easy

An AI security auditor that red-teams PRs to find exploits, not just patterns (open-source + Ollama support)

Hey everyone,

I’ve been working on an experiment in AI-driven application security called SentinAI. I’m a backend engineer in fintech, and I spent part of my recent leave trying to explore a simple question:

Most SAST tools are basically metal detectors: they’re great at catching obvious patterns like unsafe functions or missing headers.

But they struggle with the stuff that actually matters in real systems:

- IDORs

- authorization drift

- multi-tenant isolation issues

- broken middleware assumptions

- cross-file logic flaws

Attackers don’t think in patterns.

They think in systems.
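To make the gap concrete, here is a minimal sketch of an IDOR in Python. Everything in it (the `Invoice` type, the `INVOICES` store, both handler names) is hypothetical and only for illustration. Neither handler calls an "unsafe" function, so a pattern-based scanner has nothing to match on; the bug is the missing ownership check.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    id: int
    owner_id: int
    amount: float


# Hypothetical in-memory store standing in for a real database.
INVOICES = {
    1: Invoice(id=1, owner_id=100, amount=250.0),
    2: Invoice(id=2, owner_id=200, amount=990.0),
}


def get_invoice_vulnerable(current_user_id: int, invoice_id: int) -> Invoice:
    # The caller is authenticated, but ownership is never checked (IDOR):
    # any logged-in user can read any invoice by guessing its id.
    return INVOICES[invoice_id]


def get_invoice_safe(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES[invoice_id]
    # The fix is a single authorization check tied to the requesting user.
    if invoice.owner_id != current_user_id:
        raise PermissionError("invoice belongs to another user")
    return invoice
```

In a real service the ownership check often lives in middleware or another file, which is why this class of bug tends to show up only when several files are read together.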

So I built something experimental to explore that gap.

🧠 The Architecture (3-Agent Loop)

Instead of a single LLM prompt (which tends to hallucinate easily), SentinAI uses a structured multi-agent flow:

1. The Architect

Maps the system:

- routes

- auth boundaries

- data flows

- trust assumptions

2. The Adversary 🥷

Tries to break it:

- generates exploit paths

- builds step-by-step attack chains

- simulates real-world abuse scenarios

3. The Guardian 🛡️

Validates everything:

- checks exploits against actual code context

- verifies whether attacks are truly possible

- filters hallucinated or low-confidence outputs

Anything below a confidence threshold (~40%) is dropped.

The goal is not to “find everything.”

It’s to only surface things that are actually exploitable.
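The loop described above can be sketched roughly as follows. This is not SentinAI's actual code: the `Finding` type, `run_pipeline`, and the three stub agents are assumptions made for illustration, and each agent is modeled as a plain function where the real tool would presumably wrap an LLM call. The only detail taken from the post is the ~40% cut-off.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

CONFIDENCE_THRESHOLD = 0.40  # the "~40%" cut-off mentioned above


@dataclass
class Finding:
    title: str
    attack_chain: List[str]
    confidence: float = 0.0  # filled in by the Guardian step


def run_pipeline(
    code: str,
    architect: Callable[[str], Dict],
    adversary: Callable[[Dict], List[Finding]],
    guardian: Callable[[Finding, str], float],
    threshold: float = CONFIDENCE_THRESHOLD,
) -> List[Finding]:
    system_map = architect(code)         # 1. map routes, auth boundaries, data flows
    candidates = adversary(system_map)   # 2. propose attack chains
    kept = []
    for finding in candidates:
        # 3. score each candidate against the actual code
        finding.confidence = guardian(finding, code)
        if finding.confidence >= threshold:
            kept.append(finding)         # anything below the threshold is dropped
    return kept


# Hypothetical stub agents, standing in for model calls.
def stub_architect(code: str) -> Dict:
    return {"routes": ["/invoices/<id>"], "auth": "session"}


def stub_adversary(system_map: Dict) -> List[Finding]:
    return [
        Finding("IDOR on /invoices/<id>", ["log in as user A", "request user B's invoice id"]),
        Finding("SQL injection on /login", ["send a quote character in the username"]),
    ]


def stub_guardian(finding: Finding, code: str) -> float:
    # Toy validation: trust a finding only if it refers to something present in the code.
    return 0.85 if "invoices" in code and "invoices" in finding.title else 0.10


SAMPLE_CODE = "def get_invoice(invoice_id): return db.invoices[invoice_id]"
results = run_pipeline(SAMPLE_CODE, stub_architect, stub_adversary, stub_guardian)
```

With these stubs, only the IDOR finding survives. The SQL injection candidate scores 0.10 and is filtered out, which is the noise control the Guardian step is meant to provide.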

💡 What surprised me

A few things stood out while building this:

- Most real vulnerabilities only appear at interaction points between files, not within a single file

- LLMs are surprisingly good at generating attack paths, but unreliable without a validation layer

- The hardest problem wasn’t detection — it was noise control

- Without a “Guardian” layer, the system becomes mostly hallucinated security reports very quickly

🔒 Privacy / Local-first design

In fintech, sending proprietary code to external APIs is not acceptable.

So SentinAI is built to run:

- fully local via Ollama

- or inside a private VPC

- with no code leaving the environment
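For a sense of what "fully local" looks like in practice, here is a minimal sketch of talking to an Ollama server over its default loopback endpoint using only the Python standard library. It is an illustration, not SentinAI's implementation: the helper names and the `llama3` model name are assumptions, and the model must already be pulled locally.

```python
import json
import urllib.request

# Ollama's default local endpoint; the request never leaves the machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    # "llama3" is an assumed model name; substitute whichever model is installed.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    # Requires a running Ollama server; with stream=False the reply is one JSON object.
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

Calling `ask_local_model("Summarize the auth boundaries in this file: ...")` would send the prompt to the local server only. Pointing `OLLAMA_URL` at a host inside a private VPC would cover the second deployment option.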

🌐 Web3 expansion (experimental)

I expanded it beyond Web2 into smart contract security:

- Solana: missing signer checks, PDA misuse

- EVM: reentrancy, tx.origin issues

- Move: resource lifecycle bugs

Total coverage: ~45 vulnerability patterns.

🚧 Open questions (honest part)

I’m still actively figuring out:

- how to reduce hallucinated exploit paths at scale

- whether multi-agent reasoning actually holds up on large, messy codebases

- where the boundary is between “useful security reasoning” and “LLM storytelling”

- whether this can realistically outperform hybrid static analysis + human review

One thing I’ve already noticed:

That’s still an open problem.

🧪 Why I’m sharing this

This started as a “leave experiment” and somehow got 200+ organic npm installs without any promotion.

I cleaned it up and open-sourced it mainly to:

- get feedback from people deeper in security engineering

- understand where this approach fails in real-world systems

- see if “AI attacker reasoning” is actually useful in practice

🔗 If you want to poke at it

Curious to hear honest thoughts from people here:

- Where would this completely break in real codebases?

- Is multi-agent security reasoning actually useful, or just a fancy abstraction over static + LLM prompts?

- Has anyone tried something similar in production security pipelines?


Now Available: Use ChatGPT with McAfee to Spot Scams Faster

Scam messages are getting smarter and faster.

According to McAfee’s 2026 State of the Scamiverse report, Americans now spend 114 hours a year trying to figure out what’s real and what’s fake online. That’s nearly three full workweeks lost to second-guessing messages, alerts, and links.

And when scams do succeed, they move quickly. The typical scam unfolds in about 38 minutes, leaving little room for hesitation.

That creates a gap: People want to check before they act, but the tools haven’t always met them in that moment.

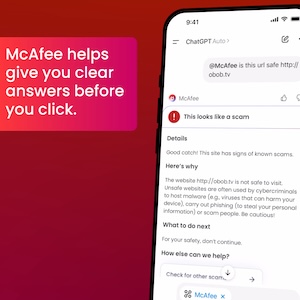

ChatGPT + McAfee is designed to close that gap, bringing scam detection directly to a platform people are already using to ask questions and make decisions.

And it’s available to anyone. You don’t have to be a McAfee subscriber.

This isn’t just detection. It’s guidance in the exact moment you’re deciding what to do.

Instead of guessing, you can paste a message or drop in a screenshot and get a clear explanation of what’s risky, and what to do next, powered by McAfee’s threat intelligence.

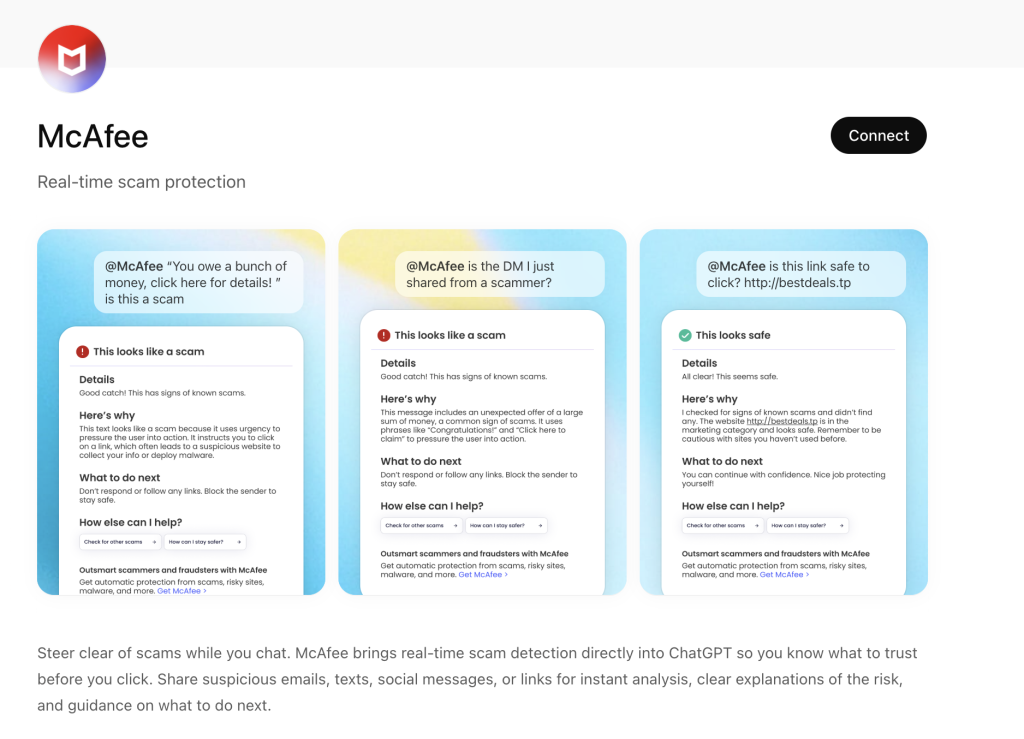

What You Can Do with ChatGPT + McAfee

With this integration, checking something suspicious becomes as simple as asking a question.

Paste a message. Drop in a link. Upload a screenshot.

McAfee analyzes it and explains what’s going on clearly and in context.

Here’s how it works:

| Feature | What it does | How it protects you |
| --- | --- | --- |
| Link safety check | Paste a suspicious URL and get a reputational analysis based on McAfee threat intelligence | Scam links are often designed to look legitimate. A quick check helps avoid phishing and malware |
| Message analysis | Submit texts, emails, or social messages for evaluation | Many scams now rely on urgency and tone. Analysis helps surface subtle red flags |
| Screenshot uploads | Upload screenshots of messages, emails, or posts for review | Scams don’t always come as clean text. This makes it easier to check what you’re actually seeing |
| Clear explanations | Get a breakdown of why something is flagged as risky or safe | Not just a warning, but an explanation that helps you recognize patterns next time |
| Guided next steps | Receive recommendations on what to do next | Helps prevent escalation, especially in moments of uncertainty |

It’s a quick, accessible way to get answers in the moment. But it’s just one part of a broader system designed to protect you more comprehensively.

Add the app to your ChatGPT account here.

Built on McAfee’s Threat Intelligence

Behind the scenes, ChatGPT + McAfee is powered by the same intelligence that fuels McAfee’s broader scam protection ecosystem.

When you submit something for review:

- Links are checked against known threat signals

- Messages are analyzed for scam patterns and language cues

- Results are translated into clear, human-readable explanations

The goal isn’t just to flag risk. It’s to help you understand it.

A New Way to Stay Ahead of Scams

Scams aren’t slowing down. If anything, they’re becoming more convincing, more personalized, and harder to detect.

That’s where ChatGPT + McAfee comes in. But this is only one part of a much bigger system designed to protect you before, during, and after a scam attempt.

With McAfee+ Advanced, multiple layers work together so you’re not left figuring it out after the damage is done:

- Identity Monitoring alerts you if your personal info shows up where it should not, so you can act fast

- Personal Data Cleanup helps remove your information from sites selling it

- Scam Detector flags suspicious texts, emails, links, QR codes, and even deepfake videos before you engage

- Safe Browsing helps block risky sites, even if you do accidentally click

- Device Security helps detect malicious apps or downloads

- Secure VPN keeps your data private, especially on public Wi-Fi

The ChatGPT experience gives you a fast, intuitive way to check something in the moment.

McAfee+ Advanced makes sure you’re protected across everything else.

The post Now Available: Use ChatGPT with McAfee to Spot Scams Faster appeared first on McAfee Blog.

ThreatsDay Bulletin: Edge Plaintext Passwords, ICS 0-Days, Patch-or-Die Alerts and 25+ New Stories

Thousands of Vibe-Coded Apps Expose Corporate and Personal Data on the Open Web