The Bumblebee malware loader seemingly vanished from the internet last October, but it's back and - oddly - relying on a vintage vector to try and gain access.…

One hundred and fifty people who worked for the Australian Taxation Office (ATO) have been investigated – and some prosecuted – for participating in a tax refund scam promoted on Facebook and TikTok.…

Patch Tuesday Microsoft fixed 73 security holes in this February's Patch Tuesday, and you better get moving because two of the vulnerabilities are under active attack.…

Updated A single packet can exhaust the processing capacity of a vulnerable DNS server, effectively disabling the machine, by exploiting a 20-plus-year-old design flaw in the DNSSEC specification.…

Microsoft Corp. today pushed software updates to plug more than 70 security holes in its Windows operating systems and related products, including two zero-day vulnerabilities that are already being exploited in active attacks.

Top of the heap on this Fat Patch Tuesday is CVE-2024-21412, a “security feature bypass” in the way Windows handles Internet Shortcut Files that Microsoft says is being targeted in active exploits. Redmond’s advisory for this bug says an attacker would need to convince or trick a user into opening a malicious shortcut file.

Researchers at Trend Micro have tied the ongoing exploitation of CVE-2024-21412 to an advanced persistent threat group dubbed “Water Hydra,” which they say has been using the vulnerability to execute a malicious Microsoft Installer File (.msi) that in turn unloads a remote access trojan (RAT) onto infected Windows systems.

The other zero-day flaw is CVE-2024-21351, another security feature bypass — this one in the built-in Windows SmartScreen component that tries to screen out potentially malicious files downloaded from the Web. Kevin Breen at Immersive Labs says it’s important to note that this vulnerability alone is not enough for an attacker to compromise a user’s workstation, and instead would likely be used in conjunction with something like a spear phishing attack that delivers a malicious file.

Satnam Narang, senior staff research engineer at Tenable, said this is the fifth vulnerability in Windows SmartScreen patched since 2022 and all five have been exploited in the wild as zero-days. They include CVE-2022-44698 in December 2022, CVE-2023-24880 in March 2023, CVE-2023-32049 in July 2023 and CVE-2023-36025 in November 2023.

Narang called special attention to CVE-2024-21410, an “elevation of privilege” bug in Microsoft Exchange Server that Microsoft says is likely to be exploited by attackers. Attacks on this flaw would lead to the disclosure of NTLM hashes, which could be leveraged as part of an NTLM relay or “pass the hash” attack, which lets an attacker masquerade as a legitimate user without ever having to log in.

“We know that flaws that can disclose sensitive information like NTLM hashes are very valuable to attackers,” Narang said. “A Russian-based threat actor leveraged a similar vulnerability to carry out attacks – CVE-2023-23397 is an Elevation of Privilege vulnerability in Microsoft Outlook patched in March 2023.”

Microsoft notes that prior to its Exchange Server 2019 Cumulative Update 14 (CU14), a security feature called Extended Protection for Authentication (EPA), which provides NTLM credential relay protections, was not enabled by default.

“Going forward, CU14 enables this by default on Exchange servers, which is why it is important to upgrade,” Narang said.

Rapid7’s lead software engineer Adam Barnett highlighted CVE-2024-21413, a critical remote code execution bug in Microsoft Office that could be exploited just by viewing a specially-crafted message in the Outlook Preview pane.

“Microsoft Office typically shields users from a variety of attacks by opening files with Mark of the Web in Protected View, which means Office will render the document without fetching potentially malicious external resources,” Barnett said. “CVE-2024-21413 is a critical RCE vulnerability in Office which allows an attacker to cause a file to open in editing mode as though the user had agreed to trust the file.”

Barnett stressed that administrators responsible for Office 2016 installations who apply patches outside of Microsoft Update should note the advisory lists no fewer than five separate patches which must be installed to achieve remediation of CVE-2024-21413; individual update knowledge base (KB) articles further note that partially-patched Office installations will be blocked from starting until the correct combination of patches has been installed.

It’s a good idea for Windows end-users to stay current with security updates from Microsoft, which can quickly pile up otherwise. That doesn’t mean you have to install them on Patch Tuesday. Indeed, waiting a day or three before updating is a sane response, given that sometimes updates go awry and usually within a few days Microsoft has fixed any issues with its patches. It’s also smart to back up your data and/or image your Windows drive before applying new updates.

For a more detailed breakdown of the individual flaws addressed by Microsoft today, check out the SANS Internet Storm Center’s list. For those admins responsible for maintaining larger Windows environments, it often pays to keep an eye on Askwoody.com, which frequently points out when specific Microsoft updates are creating problems for a number of users.

Network-attached storage (NAS) specialist QNAP has disclosed and released fixes for two new vulnerabilities, one of them a zero-day discovered in early November.…

Updated Canada's Trans-Northern Pipelines has allegedly been infiltrated by the ALPHV/BlackCat ransomware crew, which claims to have stolen 190 GB of data from the oil distributor.…

Hi,

How would I build a query to filter by source or destination subnet in Chronicle? I'm guessing the only way to do this is via regex, but I cannot get it to work. Is this possible in Chronicle?
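
For reference, here is a minimal Python sketch of what a "source or destination in subnet" filter has to compute. It is not Chronicle syntax: the event dictionaries, the field names `src` and `dst`, and the helper names are all hypothetical. Regex tends to break down here because a block such as a /20 does not line up with dotted-decimal text, so a real CIDR comparison is usually safer. Chronicle's YARA-L 2.0 function reference lists `net.ip_in_range_cidr`, which looks like the native equivalent, though whether it is available in a given search or rule context is an assumption worth checking against the current docs.

```python
import ipaddress

def in_subnet(ip: str, cidr: str) -> bool:
    """Return True if the IP address falls inside the CIDR block."""
    # strict=False tolerates a CIDR written with host bits set, e.g. 10.1.2.3/16
    return ipaddress.ip_address(ip) in ipaddress.ip_network(cidr, strict=False)

def matches_either(src_ip: str, dst_ip: str, cidr: str) -> bool:
    """Match when the source OR the destination address is in the subnet."""
    return in_subnet(src_ip, cidr) or in_subnet(dst_ip, cidr)

# Hypothetical events; "src" and "dst" are illustrative field names only.
events = [
    {"src": "10.1.2.3", "dst": "8.8.8.8"},
    {"src": "192.168.1.5", "dst": "10.1.200.9"},
    {"src": "172.16.0.1", "dst": "1.1.1.1"},
]

hits = [e for e in events if matches_either(e["src"], e["dst"], "10.1.0.0/16")]
```

With the sample data above, `hits` keeps the first two events, one matching on source and one on destination.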

The number of senior business executives stymied by an ongoing phishing campaign continues to rise with cybercriminals registering hundreds of cloud account takeovers (ATOs) since spinning it up in November.…

AI has made the dating scene. In a big way. Nearly one in four Americans say they’ve spiced up their online dating photos and content with artificial intelligence (AI) tools. Yet that might do more harm than good, as 64% of people also said that they wouldn’t trust a love interest who used AI-generated photos in their profiles.

That’s only two of the findings from this year’s Modern Love research. Our second annual study surveyed 7,000 people in seven countries to discover how AI and the internet are changing love and relationships. And it should come as no surprise that AI has ushered in several hefty changes.

In all, we found that mixing love and AI has its ups and downs. For one, people cite how effective AI is. Almost 7 in 10 people said they got more interest and better responses using AI-generated content than their own. However, people also said they didn’t like receiving AI-coded sentiments. Some 57% said they’d be hurt or offended if they found out their Valentine’s message was written by AI.

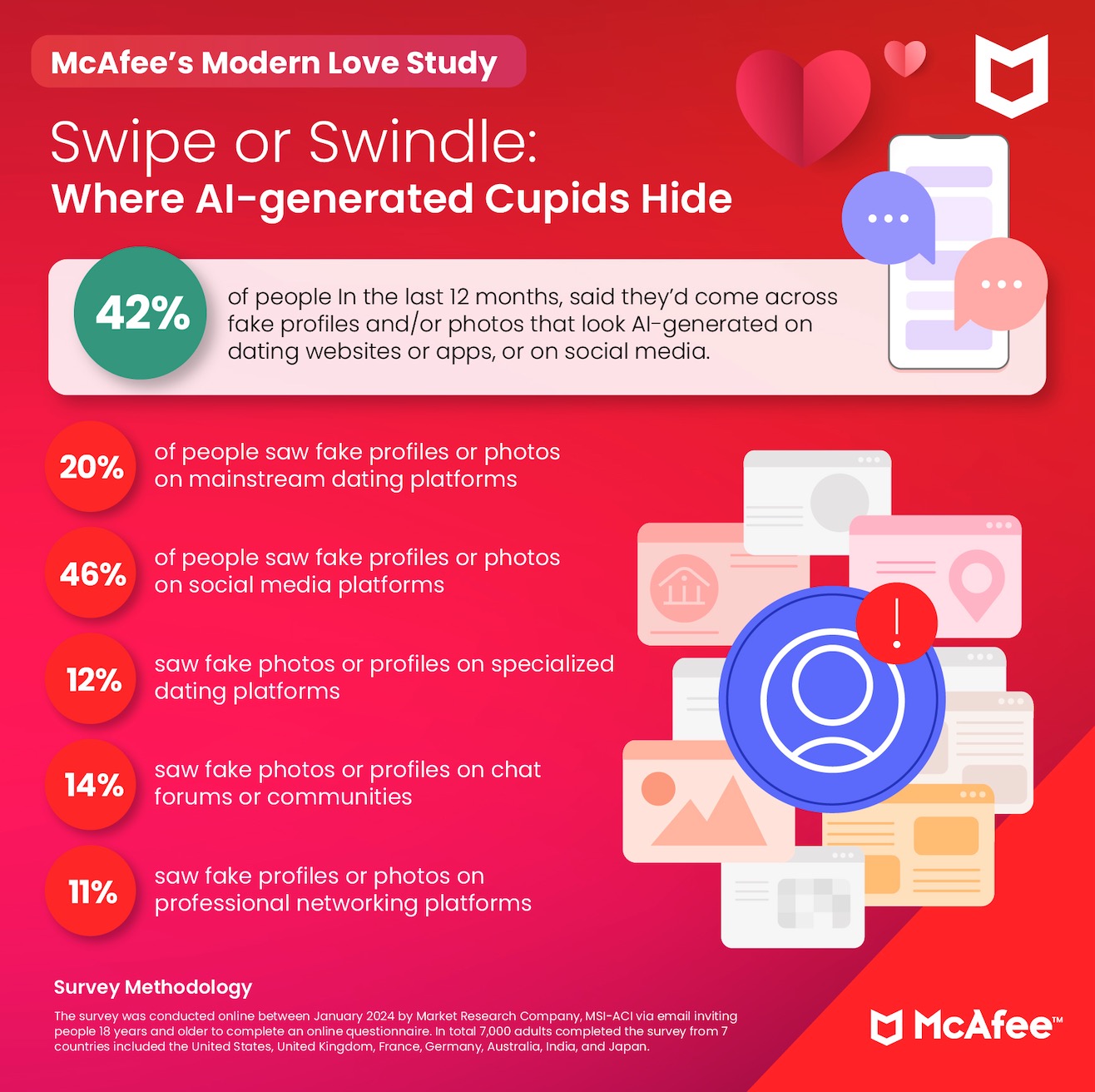

The tricky part is this — people still find it tough to spot AI content. Only 24% of people said they were sure they could tell if a message or love letter was written by an AI tool like ChatGPT. Still, 42% said they saw fake profiles or photos on dating sites, apps, and social media in the past year.

Moreover, two-thirds of people said that they’re more concerned about phony AI-created content now than they were a year ago. As further findings from McAfee Labs show, those concerns have their roots in reality.

Without question, the rise of powerful AI tools has complicated the online dating landscape. In particular, AI has made it easier for romance scammers to trick people looking for love online. They can ramp up their scams more quickly and with more sophistication than ever before.

In fact, the McAfee Labs team has seen an increase in Valentine’s campaign themes, including malware campaigns, malicious URLs, and a variety of spam and scams. They expect these numbers will continue to rise as February 14 gets closer. Since late January, our Labs team has uncovered that:

These findings fall right in line with what online daters told us. Nearly one-third of Americans said that an online love interest turned out to be a scammer. Another 14% said they discovered an interest was an AI-bot and not a real person.

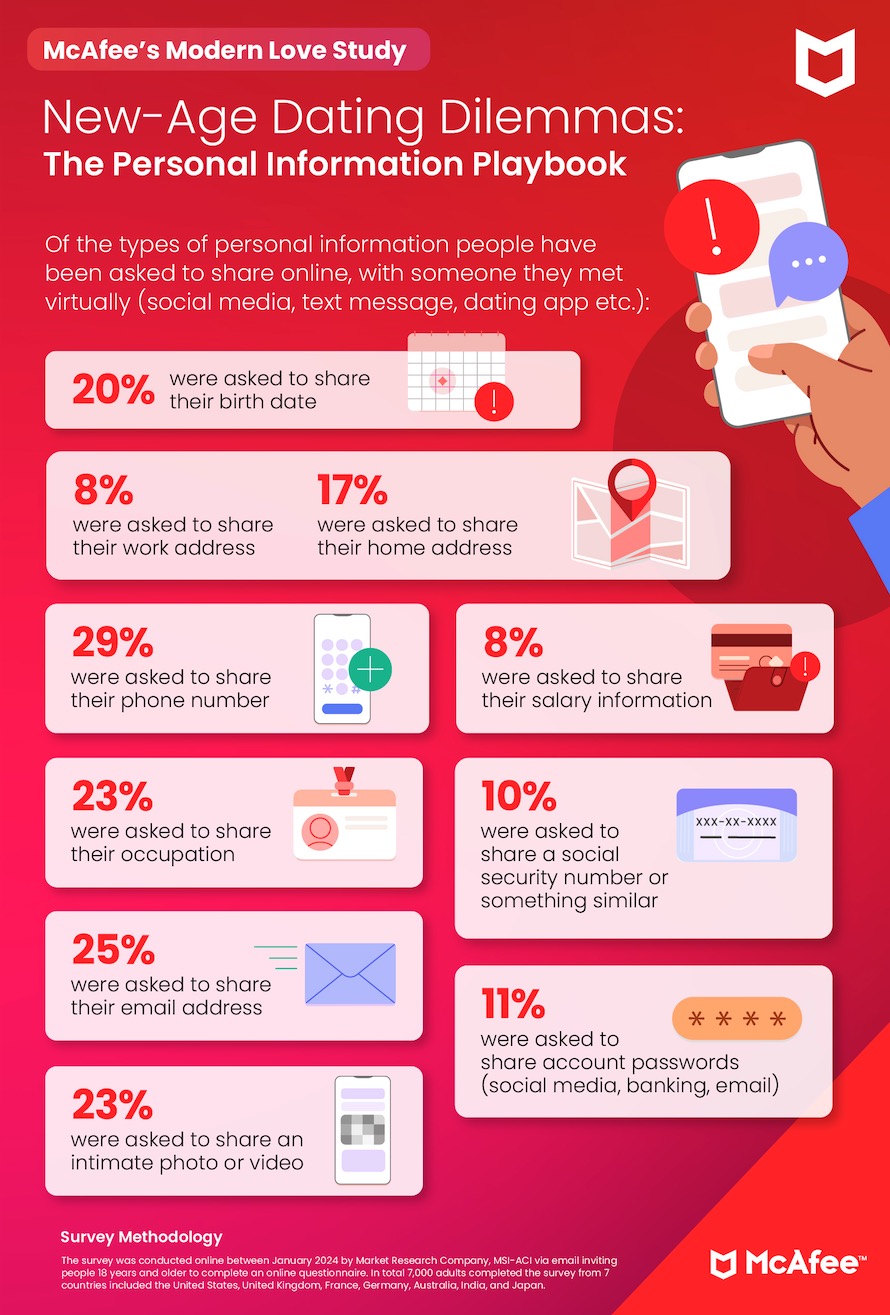

What’s at stake in these scams? Money, personal info, and sometimes both.

While many romance scammers make initial contact with their victims on dating websites and apps, they quickly move the conversation elsewhere, such as chat apps like WhatsApp and Telegram. In other cases, they move to texts. This gives scammers an advantage, as many dating platforms have fraud detection measures in place. And it’s here where romance scammers commit theft and fraud.

Large, organized crime operations run many romance scams. Moving the conversation from a dating site or app is often a sign that the victim has been “passed along” to a senior scammer who excels at extracting payments and personal info from victims. People shared the top types of info that scammers tried to tease out of them:

In a dating pool filled with an increasing number of scams and AI content, online daters find themselves doing some detective work.

Our study found that 38% of people said they used reverse image search on profile pictures of people they’ve met on social media or dating sites. Another 60% of respondents said they often use social media to dig into the background of their potential partners. As a result:

And rounding out those findings, 11% said they discovered something else entirely — that their potential special person was already in a relationship.

Online dating has always called for a bit of caution. Now with AI hitting the dating scene, it calls for a little skepticism, if not a little detective work. That, in combination with the right tools to protect your privacy, identity, and personal info, can mean the difference between a budding relationship or heartbreak — whether that’s financial, emotional, or both. The following steps can help:

The past year has brought plenty of change to online dating. People now use AI to pepper up their dating profiles and pics, compose love notes, or come up with a few lines for the inside of a card. Likewise, scammers have welcomed AI just as warmly. They use it to fuel content and chats that swindle victims looking for love, backed by sophisticated and large-scale operations that run like a business.

Yet today’s online daters still have what it takes to spot a fake. They have several tools and protections available to them, many powered by AI, that can help them steer clear of heartbreak, both the financial and emotional kind. With those tools, along with a mix of healthy skepticism and detective work, they can still date online with confidence, even as AI continues to make its way onto the dating scene.

Survey Methodology

The survey was conducted online in January 2024 by market research company MSI-ACI, which emailed invitations to people 18 years and older to complete an online questionnaire. In total, 7,000 adults completed the survey from seven countries: the United States, United Kingdom, France, Germany, Australia, India, and Japan.

The post Love Bytes – How AI is shaping Modern Love appeared first on McAfee Blog.

Meta has acknowledged that phone number reuse that allows takeovers of its accounts "is a concern," but the ad biz insists the issue doesn't qualify for its bug bounty program and is a matter for telecom companies to sort out.…

Indian tech services giant Infosys has been named as the source of a data leak suffered by the Bank of America.…

Deep fakes are a growing concern in the age of digital media and can be extremely dangerous for school children. Deep fakes are digital images, videos, or audio recordings that have been manipulated to look or sound like someone else. They can be used to spread misinformation, create harassment, and even lead to identity theft. With the prevalence of digital media, it’s important to protect school children from deep fakes.

Educating students on deep fakes is an essential step in protecting them from the dangers of these digital manipulations. Schools should provide students with information about the different types of deep fakes and how to spot them.

Media literacy is an important skill that students should have in order to identify deep fakes and other forms of misinformation. Schools should provide students with resources to help them understand how to evaluate the accuracy of a digital image or video.

Schools should emphasize the importance of digital safety and provide students with resources on how to protect their online identities. This includes teaching students about the risks of sharing personal information online, using strong passwords, and being aware of phishing scams.

Schools should monitor online activity to ensure that students are not exposed to deep fakes or other forms of online harassment. Schools should have policies in place to protect students from online bullying and harassment, and they should take appropriate action if they find any suspicious activity.

By following these tips, schools can help protect their students from the dangers of deep fakes. Educating students on deep fakes, encouraging them to be media literate, promoting digital safety, and monitoring online activity are all important steps to ensure that school children are safe online.

By equipping students with the tools they need to navigate the online world, schools can also help them learn how to use digital technology responsibly. Through educational resources and programs, schools can teach students the importance of digital citizenship and how to use digital technology ethically and safely. Finally, schools should promote collaboration and communication between parents, students, and school administration to ensure everyone is aware of the risks of deep fakes and other forms of online deception.

Deep fakes have the potential to lead to identity theft, particularly if deep fake tools are used to steal the identities of students or even teachers. McAfee’s Identity Monitoring Service, as part of McAfee+, monitors the dark web for your personal info, including email, government IDs, credit card and bank account info, and more. We’ll help keep your personal info safe, with early alerts if your data is found on the dark web, so you can take action to secure your accounts before they’re used for identity theft.

The post How to Protect School Children From Deep Fakes appeared first on McAfee Blog.

With the rise of artificial intelligence (AI) and machine learning, concerns about the privacy of personal data have reached an all-time high. Generative AI is a type of AI that can generate new data from existing data, such as images, videos, and text. This technology can be used for a variety of purposes, from facial recognition to creating “deepfakes” and manipulating public opinion. As a result, it’s important to be aware of the potential risks that generative AI poses to your privacy.

In this blog post, we’ll discuss how to protect your privacy from generative AI.

Generative AI is a type of AI that uses existing data to generate new data. It’s usually used for things like facial recognition, speech recognition, and image and video generation. This technology can be used for both good and bad purposes, so it’s important to understand how it works and the potential risks it poses to your privacy.

Generative AI can be used to create deepfakes, which are fake images or videos that are generated using existing data. This technology can be used for malicious purposes, such as manipulating public opinion, identity theft, and spreading false information. It’s important to be aware of the potential risks that generative AI poses to your privacy.

Generative AI uses existing data to generate new data, so it’s important to be aware of what data you’re sharing online. Be sure to only share data that you’re comfortable with and be sure to use strong passwords and two-factor authentication whenever possible.

There are a number of privacy-focused tools available that can help protect your data from generative AI. These include tools like privacy-focused browsers, VPNs, and encryption tools. It’s important to understand how these tools work and how they can help protect your data.

It’s important to stay up-to-date on the latest developments in generative AI and privacy. Follow trusted news sources and keep an eye out for changes in the law that could affect your privacy.

By following these tips, you can help protect your privacy from generative AI. It’s important to be aware of the potential risks that this technology poses and to take steps to protect yourself and your data.

Of course, the most important step is to be aware and informed. Research the organizations that are using generative AI and make sure you understand how they use your data. Be sure to read the terms and conditions of any contracts you sign and be aware of any third parties that may have access to your data. Additionally, be sure to look out for notifications of changes in privacy policies and take the time to understand any changes that could affect you.

Finally, make sure to regularly check your accounts and reports to make sure that your data is not being used without your consent. You can also take the extra step of making use of the security and privacy features available on your device. Taking the time to understand which settings are available, as well as what data is being collected and used, can help you protect your privacy and keep your data safe.

This blog post was co-written with artificial intelligence (AI) as a tool to supplement, enhance, and make suggestions. While AI may assist in the creative and editing process, the thoughts, ideas, opinions, and the finished product are entirely human and original to their author. We strive to ensure accuracy and relevance, but please be aware that AI-generated content may not always fully represent the intent or expertise of human-authored material.

The post How to Protect Your Privacy From Generative AI appeared first on McAfee Blog.

AI scams are becoming increasingly common. With the rise of artificial intelligence and technology, fraudulent activity is becoming more and more sophisticated. As a result, it is becoming increasingly important for families to be aware of the dangers posed by AI scams and to take steps to protect themselves.

By taking these steps, you can help protect your family from AI scams. Educating yourself and your family about the potential risks of AI scams, monitoring your family’s online activity, using strong passwords, installing anti-virus software, and checking your credit report regularly can help keep your family safe from AI scams.

No one likes to be taken advantage of or scammed. By being aware of the potential risks of AI scams, you can protect your family from becoming victims.

In addition, it is important to be aware of emails or texts that appear to be from legitimate sources but are actually attempts to entice you to click on suspicious links or provide personal information. If you receive a suspicious email or text, delete it immediately. If you are unsure, contact the company directly to verify that the message is legitimate. By being aware of potential AI scams, you can keep your family safe from financial loss or identity theft.

You can also take additional steps to protect yourself and your family from AI scams. Consider using two-factor authentication when logging in to websites or apps, and keep all passwords and usernames secure. Be skeptical of unsolicited emails or texts, and never provide confidential information unless you are sure you know who you are dealing with. Finally, always consider the source and research any unfamiliar company or service before you provide any personal information. By taking these steps, you can help to protect yourself and your family from the dangers posed by AI scams.

Monitor your bank accounts and credit reports to ensure that no unauthorized activity is taking place. Set up notifications to alert you of any changes or suspicious activity. Make sure to update your security software to the latest version and be aware of phishing attempts, which try to gain access to your personal information. If you receive a suspicious email or text, do not click on any links and delete the message immediately.

Finally, stay informed and know the signs of a scam. Be vigilant about your online accounts and look out for any requests for personal information. If something looks suspicious, trust your instincts and don’t provide any information. Report any suspicious activity to the authorities and spread the word to keep others from falling victim to AI scams.

This blog post was co-written with artificial intelligence (AI) as a tool to supplement, enhance, and make suggestions. While AI may assist in the creative and editing process, the thoughts, ideas, opinions, and the finished product are entirely human and original to their author. We strive to ensure accuracy and relevance, but please be aware that AI-generated content may not always fully represent the intent or expertise of human-authored material.

The post How to Protect Your Family From AI Scams appeared first on McAfee Blog.

Some smart folks have found a way to automatically unscramble documents encrypted by the Rhysida ransomware, and used that know-how to produce and release a handy recovery tool for victims.…

Updated Dutch health insurers are reportedly forcing breast cancer patients to submit photos of their breasts prior to reconstructive surgery despite a government ban on precisely that.…

The FCC's updated reporting requirements mean telcos in America will have just seven days to officially disclose that a criminal has broken into their systems.…

Willis Lease Finance Corporation has admitted to US regulators that it fell prey to a "cybersecurity incident" after data purportedly stolen from the biz was posted to the Black Basta ransomware group's leak blog.…

The Caravan and Motorhome Club (CAMC) and the experts it drafted to help clean up the mess caused by a January cyberattack still can't figure out whether members' data was stolen.…