This article originally appeared in The Domain Name Industry Brief (Volume 18, Issue 3)

Earlier this year, the Internet Engineering Task Force’s (IETF’s) Internet Engineering Steering Group (IESG) announced that several Proposed Standards related to the Registration Data Access Protocol (RDAP), including three that I co-authored, were being promoted to the prestigious designation of Internet Standard. Initially accepted as proposed standards six years ago, RFC 7480, RFC 7481, RFC 9082 and RFC 9083 now comprise the new Standard 95. RDAP allows users to access domain registration data and could one day replace its predecessor the WHOIS protocol. RDAP is designed to address some widely recognized deficiencies in the WHOIS protocol and can help improve the registration data chain of custody.

In the discussion that follows, I’ll look back at the registry data model, given the evolution from WHOIS to the RDAP protocol, and examine how the RDAP protocol can help improve upon the more traditional, WHOIS-based registry models.

In 1998, Network Solutions was responsible for providing both consumer-facing registrar and back-end registry functions for the legacy .com, .net and .org generic top-level domains (gTLDs). Network Solutions collected information from domain name registrants, used that information to process domain name registration requests, and published both collected data and data derived from processing registration requests (such as expiration dates and status values) in a public-facing directory service known as WHOIS.

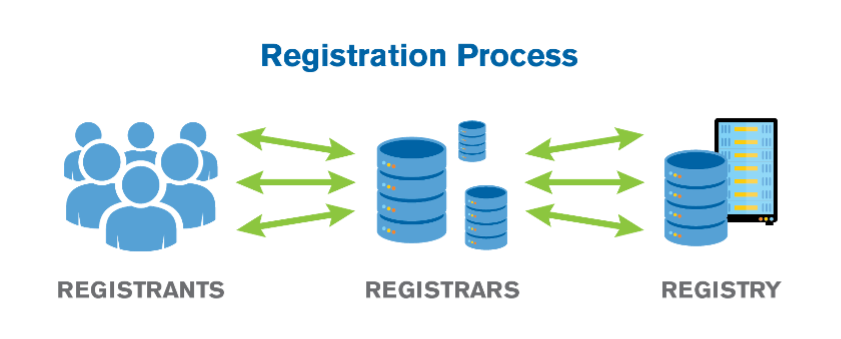

From Network Solution’s perspective as the registry, the chain of custody for domain name registration data involved only two parties: the registrant (or their agent) and Network Solutions. With the introduction of a Shared Registration System (SRS) in 1999, multiple registrars began to compete for domain name registration business by using the registry services operated by Network Solutions. The introduction of additional registrars and the separation of registry and registrar functions added parties to the chain of custody of domain name registration data. Information flowed from the registrant, to the registrar, and then to the registry, typically crossing multiple networks and jurisdictions, as depicted in Figure 1.

Over time, new gTLDs and new registries came into existence, new WHOIS services (with different output formats) were launched, and countries adopted new laws and regulations focused on protecting the personal information associated with domain name registration data. As time progressed, it became clear that WHOIS lacked several needed features, such as:

The IETF made multiple attempts to add features to WHOIS to address some of these issues, but none of them were widely adopted. A possible replacement protocol known as the Internet Registry Information Service (IRIS) was standardized in 2005, but it was not widely adopted. Something else was needed, and the IETF went back to work to produce what became known as RDAP.

RDAP was specified in a series of five IETF Proposed Standard RFC documents, including the following, all of which were published in March 2015:

Only when RDAP was standardized did we start to see broad deployment of a possible WHOIS successor by domain name registries, domain name registrars and address registries.

The broad deployment of RDAP led to RFCs 7480 and 7481 becoming Internet Standard RFCs (part of Internet Standard 95) without modification in March 2021. As operators of registration data directory services implemented and deployed RDAP, they found places in the other specifications where minor corrections and clarifications were needed without changing the protocol itself. RFC 7482 was updated to become Internet Standard RFC 9082, which was published in June 2021. RFC 7483 was updated to become Internet Standard RFC 9083, which was also published in June 2021. All were added to Standard 95. As of the writing of this article, RFC 7484 is in the process of being reviewed and updated for elevation to Internet Standard status.

Operators of registration data directory services who implemented RDAP can take advantage of key features not available in the WHOIS protocol. I’ve highlighted some of these important features in the table below.

| RDAP Feature | Benefit |

| Standard, well-understood, and widely available HTTP transport | Relatively easy to implement, deploy and operate using common web service tools, infrastructure and applications. |

| Securable via HTTPS | Helps provide confidentiality for RDAP queries and responses, reducing the amount of information that is disclosed to monitors. |

| Structured output in JavaScript Object Notation (JSON) | JSON is well-understood and tool friendly, which makes it easier for clients to parse and format responses from all servers without the need for software that’s customized for different service providers. |

| Easily extensible | Designed to support the addition of new features without breaking existing implementations. This makes it easier to address future function needs with less risk of implementation incompatibility. |

| Internationalized output, with full support for Unicode character sets | Allows implementations to provide human-readable inputs and outputs that are represented in a language appropriate to the local operating environment. |

| Referral capability, leveraging HTTP constructs | Provides information to software clients that allow the client to retrieve additional information from other RDAP servers. This can be used to hide complexity from human users. |

| Support of standardized authentication | RDAP can take full advantage of all of the client identification, authentication and authorization methods that are available to web services. This means that RDAP can be used to provide the basic framework for differentiated access to registration data based on attributes associated with the user and the user’s query. |

Verisign’s RDAP service, which was originally launched as an experimental implementation several years before gaining widespread adoption, allows users to look up records in the registry database for all registered .com, .net, .name, .cc and .tv domain names. It also supports Internationalized Domain Names (IDNs).

We at Verisign were pleased not only to see the IETF recognize the importance of RDAP by elevating it to an Internet Standard, but also that the protocol became a requirement for ICANN-accredited registrars and registries as of August 2019. Widespread implementation of the RDAP protocol makes registration data more secure, stable and resilient, and we are hopeful that the community will evolve the prescribed implementation of RDAP such that the full power of this rich protocol will be deployed.

You can learn more in the RDAP Help section of the Verisign website, and access helpful documents such as the RDAP technical implementation guide and the RDAP response profile.

The post Industry Insights: RDAP Becomes Internet Standard appeared first on Verisign Blog.

This is the final in a multi-part series on cryptography and the Domain Name System (DNS).

In previous posts in this series, I’ve discussed a number of applications of cryptography to the DNS, many of them related to the Domain Name System Security Extensions (DNSSEC).

In this final blog post, I’ll turn attention to another application that may appear at first to be the most natural, though as it turns out, may not always be the most necessary: DNS encryption. (I’ve also written about DNS encryption as well as minimization in a separate post on DNS information protection.)

In 2014, the Internet Engineering Task Force (IETF) chartered the DNS PRIVate Exchange (dprive) working group to start work on encrypting DNS queries and responses exchanged between clients and resolvers.

That work resulted in RFC 7858, published in 2016, which describes how to run the DNS protocol over the Transport Layer Security (TLS) protocol, also known as DNS over TLS, or DoT.

DNS encryption between clients and resolvers has since gained further momentum, with multiple browsers and resolvers supporting DNS over Hypertext Transport Protocol Security (HTTPS), or DoH, with the formation of the Encrypted DNS Deployment Initiative, and with further enhancements such as oblivious DoH.

The dprive working group turned its attention to the resolver-to-authoritative exchange during its rechartering in 2018. And in October of last year, ICANN’s Office of the CTO published its strategy recommendations for the ICANN-managed Root Server (IMRS, i.e., the L-Root Server), an effort motivated in part by concern about potential “confidentiality attacks” on the resolver-to-root connection.

From a cryptographer’s perspective the prospect of adding encryption to the DNS protocol is naturally quite interesting. But this perspective isn’t the only one that matters, as I’ve observed numerous times in previous posts.

A common theme in this series on cryptography and the DNS has been the question of whether the benefits of a technology are sufficient to justify its cost and complexity.

This question came up not only in my review of two newer cryptographic advances, but also in my remarks on the motivation for two established tools for providing evidence that a domain name doesn’t exist.

Recall that the two tools — the Next Secure (NSEC) and Next Secure 3 (NSEC3) records — were developed because a simpler approach didn’t have an acceptable risk / benefit tradeoff. In the simpler approach, to provide a relying party assurance that a domain name doesn’t exist, a name server would return a response, signed with its private key, “<name> doesn’t exist.”

From a cryptographic perspective, the simpler approach would meet its goal: a relying party could then validate the response with the corresponding public key. However, the approach would introduce new operational risks, because the name server would now have to perform online cryptographic operations.

The name server would not only have to protect its private key from compromise, but would also have to protect the cryptographic operations from overuse by attackers. That could open another avenue for denial-of-service attacks that could prevent the name server from responding to legitimate requests.

The designers of DNSSEC mitigated these operational risks by developing NSEC and NSEC3, which gave the option of moving the private key and the cryptographic operations offline, into the name server’s provisioning system. Cryptography and operations were balanced by this better solution. The theme is now returning to view through the recent efforts around DNS encryption.

Like the simpler initial approach for authentication, DNS encryption may meet its goal from a cryptographic perspective. But the operational perspective is important as well. As designers again consider where and how to deploy private keys and cryptographic operations across the DNS ecosystem, alternatives with a better balance are a desirable goal.

In addition to encryption, there has been research into other, possibly lower-risk alternatives that can be used in place of or in addition to encryption at various levels of the DNS.

We call these techniques collectively minimization techniques.

In “textbook” DNS resolution, a resolver sends the same full domain name to a root server, a top-level domain (TLD) server, a second-level domain (SLD) server, and any other server in the chain of referrals, until it ultimately receives an authoritative answer to a DNS query.

This is the way that DNS resolution has been practiced for decades, and it’s also one of the reasons for the recent interest in protecting information on the resolver-to-authoritative exchange: The full domain name is more information than all but the last name server needs to know.

One such minimization technique, known as qname minimization, was identified by Verisign researchers in 2011 and documented in RFC 7816 in 2016. (In 2015, Verisign announced a royalty-free license to its qname minimization patents.)

With qname minimization, instead of sending the full domain name to each name server, the resolver sends only as much as the name server needs either to answer the query or to refer the resolver to a name server at the next level. This follows the principle of minimum disclosure: the resolver sends only as much information as the name server needs to “do its job.” As Matt Thomas described in his recent blog post on the topic, nearly half of all .com and .net queries received by Verisign’s .com TLD servers were in a minimized form as of August 2020.

Other techniques that are part of this new chapter in DNS protocol evolution include NXDOMAIN cut processing [RFC 8020] and aggressive DNSSEC caching [RFC 8198]. Both leverage information present in the DNS to reduce the amount and sensitivity of DNS information exchanged with authoritative name servers. In aggressive DNSSEC caching, for example, the resolver analyzes NSEC and NSEC3 range proofs obtained in response to previous queries to determine on its own whether a domain name doesn’t exist. This means that the resolver doesn’t always have to ask the authoritative server system about a domain name it hasn’t seen before.

All of these techniques, as well as additional minimization alternatives I haven’t mentioned, have one important common characteristic: they only change how the resolver operates during the resolver-authoritative exchange. They have no impact on the authoritative name server or on other parties during the exchange itself. They thereby mitigate disclosure risk while also minimizing operational risk.

The resolver’s exchanges with authoritative name servers, prior to minimization, were already relatively less sensitive because they represented aggregate interests of the resolver’s many clients1. Minimization techniques lower the sensitivity even further at the root and TLD levels: the resolver sends only its aggregate interests in TLDs to root servers, and only its interests in SLDs to TLD servers. The resolver still sends the aggregate interests in full domain names at the SLD level and below2, and may also include certain client-related information at these levels, such as the client-subnet extension. The lower levels therefore may have different protection objectives than the upper levels.

Minimization techniques and encryption together give DNS designers additional tools for protecting DNS information — tools that when deployed carefully can balance between cryptographic and operational perspectives.

These tools complement those I’ve described in previous posts in this series. Some have already been deployed at scale, such as a DNSSEC with its NSEC and NSEC3 non-existence proofs. Others are at various earlier stages, like NSEC5 and tokenized queries, and still others contemplate “post-quantum” scenarios and how to address them. (And there are yet other tools that I haven’t covered in this series, such as authenticated resolution and adaptive resolution.)

Modern cryptography is just about as old as the DNS. Both have matured since their introduction in the late 1970s and early 1980s respectively. Both bring fundamental capabilities to our connected world. Both continue to evolve to support new applications and to meet new security objectives. While they’ve often moved forward separately, as this blog series has shown, there are also opportunities for them to advance together. I look forward to sharing more insights from Verisign’s research in future blog posts.

Read the complete six blog series:

1. This argument obviously holds more weight for large resolvers than for small ones — and doesn’t apply for the less common case of individual clients running their own resolvers. However, small resolvers and individual clients seeking additional protection retain the option of sending sensitive queries through a large, trusted resolver, or through a privacy-enhancing proxy. The focus in our discussion is primarily on large resolvers.

2. In namespaces where domain names are registered at the SLD level, i.e., under an effective TLD, the statements in this note about “root and TLD” and “SLD level and below” should be “root through effective TLD” and “below effective TLD level.” For simplicity, I’ve placed the “zone cut” between TLD and SLD in this note.

The post Information Protection for the Domain Name System: Encryption and Minimization appeared first on Verisign Blog.